Most product teams measure customer experience only when something goes wrong — a support spike, a churn surge, a bad NPS cycle. By then you're already behind. Customer experience metrics give you leading indicators: early signals that a user is struggling, thriving, or about to leave before revenue takes the hit. This guide covers the 10 metrics that consistently predict retention and growth, with exact formulas, industry benchmarks, and step-by-step setup for Amplitude, Mixpanel, PostHog, GA4, and Stripe.

Key Takeaways

- CSAT and CES measure transactional satisfaction; NPS measures relationship health — you need both to get a complete picture.

- Churn rate and retention rate are two sides of the same coin; track both because they reveal different failure modes.

- Calculate Customer Lifetime Value per segment, not as a single company average — the per-channel view is what actually informs acquisition and pricing decisions.

- First Response Time and Average Resolution Time measure support speed; pair them with CES scores to understand whether speed or effort is the real problem.

- An engagement score combining feature breadth, session frequency, and depth actions predicts retention more reliably than any single metric.

What Customer Experience Metrics Actually Measure

Customer experience metrics fall into three categories: perception metrics (what users feel), behavioral metrics (what users do), and outcome metrics (what results from both). Each category catches different failure modes — and each requires different instrumentation.

Perception vs. Behavioral vs. Outcome Metrics

Perception metrics — CSAT, NPS, CES — are survey-based signals of how users feel at a moment in time. They're high-signal but low-frequency and subject to response bias. Behavioral metrics — engagement score, feature adoption, session depth — come from your product instrumentation and reflect what users actually do, not what they say they do. Outcome metrics — churn rate, retention rate, CLV — are the downstream results that perception and behavior jointly produce. A healthy CX stack tracks all three: perceptions explain the 'why', behaviors show the 'what', and outcomes measure the 'so what'.

When to Use Each Metric Type

Use perception metrics after key journey moments: onboarding completion, first value delivery, support ticket resolution. Use behavioral metrics continuously — instrument them once and let dashboards surface trends automatically. Use outcome metrics monthly or quarterly for cohort analysis and board reporting. The trap most teams fall into is measuring outcomes weekly (too noisy) and perception metrics never (too manual). Flip that cadence.

Customer Experience Metrics for Satisfaction: CSAT, NPS, and CES

These three perception metrics sit at the top of every CX stack. They're easy to collect badly and surprisingly hard to collect well — survey timing and targeting matter as much as the questions themselves.

CSAT: Customer Satisfaction Score

CSAT measures user satisfaction with a specific interaction — a support ticket, a feature, an onboarding flow. Formula: (Number of satisfied responses / Total responses) × 100, where 'satisfied' means 4 or 5 on a 5-point scale. Industry benchmark for SaaS: 75–85%; below 70% warrants immediate investigation. Collect CSAT in PostHog using Surveys triggered on specific events like support_ticket_resolved or onboarding_completed. In Amplitude, fire a custom event csat_response with a score property and build a distribution chart in Events Explorer to track the percentage scoring 4–5.
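To make the formula concrete, here is a minimal JavaScript sketch that computes CSAT from raw 1–5 ratings. The helper name `csatScore` is hypothetical. It assumes you have already exported the responses from PostHog, Amplitude, or your survey tool as an array of integers.

```javascript
// Hypothetical helper: CSAT = (satisfied responses / total responses) × 100,
// where "satisfied" means a 4 or 5 on a 5-point scale.
function csatScore(ratings) {
  if (ratings.length === 0) return null; // no responses, no score
  const satisfied = ratings.filter((r) => r >= 4).length;
  return (satisfied / ratings.length) * 100;
}

// Example: 8 of 10 respondents answered 4 or 5
const ratings = [5, 4, 4, 5, 3, 5, 4, 2, 5, 4];
console.log(csatScore(ratings)); // 80
```

At 80%, this sample falls inside the 75–85% SaaS range quoted above.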

```javascript
// PostHog: Trigger CSAT survey after a key product event
posthog.capture('onboarding_completed', {
  user_type: 'trial',
  plan: 'pro'
});

// Then in PostHog UI:
// Surveys → Create → Trigger on event 'onboarding_completed'
// Set display delay: 0s (trigger immediately after event)
```

NPS: Net Promoter Score

NPS measures relationship health, not transactional satisfaction. Formula: % Promoters (scores 9–10) − % Detractors (scores 0–6). Score ranges: below 0 = critical, 0–30 = okay, 30–70 = good, 70+ = excellent. B2B SaaS median sits around 35–40 (Satmetrix 2023 Global NPS Benchmarks). Collect NPS on a 90-day rolling window per user — more frequent than that creates survey fatigue. In Mixpanel, use mixpanel.track('nps_response', { score: 8, segment: 'enterprise', days_since_signup: 90 }) and build an Insights report calculating promoter minus detractor percentage. Segment NPS by plan tier and acquisition channel — aggregate NPS hides which cohorts are at risk.
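The NPS formula and the advice to segment before aggregating can be sketched together. This is a hypothetical example, not a Mixpanel API. It assumes responses are exported as `{ segment, score }` objects on the 0–10 scale.

```javascript
// Hypothetical helper: NPS = % Promoters (9–10) − % Detractors (0–6).
// Passives (7–8) count toward the total but toward neither group.
function netPromoterScore(scores) {
  if (scores.length === 0) return null;
  const promoters = scores.filter((s) => s >= 9).length;
  const detractors = scores.filter((s) => s <= 6).length;
  return ((promoters - detractors) / scores.length) * 100;
}

// Compute NPS per segment so at-risk cohorts stay visible
function npsBySegment(responses) {
  const groups = {};
  for (const { segment, score } of responses) {
    if (!groups[segment]) groups[segment] = [];
    groups[segment].push(score);
  }
  const result = {};
  for (const [segment, scores] of Object.entries(groups)) {
    result[segment] = netPromoterScore(scores);
  }
  return result;
}

const responses = [
  { segment: 'enterprise', score: 10 },
  { segment: 'enterprise', score: 9 },
  { segment: 'enterprise', score: 7 },
  { segment: 'enterprise', score: 4 },
  { segment: 'self-serve', score: 3 },
  { segment: 'self-serve', score: 8 },
];
console.log(npsBySegment(responses)); // { enterprise: 25, 'self-serve': -50 }
```

The blended NPS for these six responses is 0. That single number would hide the fact that self-serve users are strongly negative.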

CES: Customer Effort Score

CES measures how much effort a user had to expend to complete a task. Lower effort correlates strongly with repurchase and recommendation intent, more so than satisfaction alone (CEB research). Formula: Average of all effort ratings on a 1–7 scale (1 = very low effort, 7 = very high effort). A score below 3.0 is strong; above 4.5 signals friction worth fixing. CES is most powerful immediately after support ticket resolution and new feature rollouts. In Amplitude, fire ces_response with {task: 'upgrade_plan', score: 2, entry_point: 'billing_page'} and monitor the rolling average alongside the p50 and p75 percentiles.
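Here is a minimal sketch of the CES calculation. It assumes scores are exported as integers on the 1–7 scale above. The helper names are hypothetical. Percentiles use the nearest-rank method, which is one of several common definitions.

```javascript
// Hypothetical helpers for CES on a 1–7 scale (1 = very low effort).
// The headline CES is the mean; percentiles describe the distribution.
function mean(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Nearest-rank percentile: smallest value with at least p% of data at or below it
function percentile(values, p) {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank - 1, 0)];
}

function cesSummary(scores) {
  return {
    ces: mean(scores),
    p50: percentile(scores, 50),
    p75: percentile(scores, 75),
  };
}

const upgradePlanScores = [2, 1, 3, 2, 6, 2, 5, 3];
console.log(cesSummary(upgradePlanScores)); // { ces: 3, p50: 2, p75: 3 }
```

In this sample the median effort is 2. Two high-effort responses (5 and 6) pull the mean up to 3.0, right on the "strong" threshold. When the mean and median diverge like this, read the individual high-effort responses before concluding the flow is frictionless.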

```javascript
// Amplitude: Track CES after completing a critical task
amplitude.track('ces_response', {
  task: 'upgrade_plan',
  score: 2, // 1 (very low effort) to 7 (very high effort)
  entry_point: 'billing_page',
  support_interaction: false
});
```

Retention and Revenue Metrics: Churn Rate, Retention Rate, and CLV

These are the outcome metrics that CX programs ultimately move. Track all three by cohort — aggregate numbers hide the segments where you're winning or losing.

Churn Rate

Formula: (Customers lost in period / Customers at start of period) × 100. For SaaS, always distinguish logo churn from revenue churn — losing five small accounts while retaining one enterprise account produces high logo churn with low revenue impact. Benchmarks: 2–5% monthly for B2C SaaS, 0.5–2% monthly for B2B SaaS (Recurly 2023 Subscription Economy Index). In Stripe, use the Dashboard's MRR movement report or filter subscriptions by status: 'canceled' via the API. In Amplitude, the Retention Analysis chart with 'did not perform' logic catches behavioral churn — users who stopped engaging — before invoice cancellation appears in Stripe.
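The logo-versus-revenue distinction is easiest to see with numbers. This hypothetical sketch assumes one record per customer active at the start of the period, with that customer's MRR and a `canceled` flag. It computes gross revenue churn only and ignores expansion and contraction.

```javascript
// Hypothetical sketch: logo churn vs. gross revenue (MRR) churn for one period.
function churnRates(accounts) {
  const startMrr = accounts.reduce((sum, a) => sum + a.mrr, 0);
  const lost = accounts.filter((a) => a.canceled);
  const lostMrr = lost.reduce((sum, a) => sum + a.mrr, 0);
  return {
    // multiply before dividing to keep whole-number percentages exact
    logoChurnPct: (lost.length * 100) / accounts.length,
    revenueChurnPct: (lostMrr * 100) / startMrr,
  };
}

// Nine $100/month accounts (five cancel) plus one $9,100/month enterprise account that stays
const accounts = [
  ...Array.from({ length: 9 }, (_, i) => ({ mrr: 100, canceled: i < 5 })),
  { mrr: 9100, canceled: false },
];
console.log(churnRates(accounts)); // { logoChurnPct: 50, revenueChurnPct: 5 }
```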

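```javascript
// Stripe API (Node.js): fetch subscriptions that ended during January 2024.
// The `created` filter matches a subscription's creation date, not its
// cancellation date, so filter canceled subscriptions on `ended_at` instead.
const stripe = require('stripe')(process.env.STRIPE_SECRET_KEY);

async function subscriptionsEndedBetween(start, end) {
  const from = Math.floor(start.getTime() / 1000); // Stripe timestamps are Unix seconds
  const to = Math.floor(end.getTime() / 1000);
  const ended = [];
  // Auto-pagination walks every page instead of stopping at the first 100 results
  for await (const sub of stripe.subscriptions.list({
    status: 'canceled',
    limit: 100,
    expand: ['data.customer']
  })) {
    if (sub.ended_at >= from && sub.ended_at < to) ended.push(sub);
  }
  return ended;
}
// const canceledSubs = await subscriptionsEndedBetween(new Date('2024-01-01T00:00:00Z'), new Date('2024-02-01T00:00:00Z'));
```

Retention Rate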

Retention rate is the complement of churn (retention rate = 100% − churn rate). Formula: ((Customers at end of period − New customers acquired) / Customers at start of period) × 100. A 95% monthly retention rate compounds to only 54% annual retention (0.95^12 ≈ 0.54). That level makes sustainable growth structurally difficult without constant top-of-funnel spending. Target 98%+ monthly for B2B SaaS, consistent with the 0.5–2% monthly churn benchmark above. In Amplitude, define 'retained' as your activation event or core value action rather than any session, so zombie logins don't inflate retention. In Mixpanel, Retention Reports support both 'any event' and 'specific event' modes; use the second to measure retention against your activation criterion.
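Here is a short sketch of the retention formula and the compounding arithmetic behind the 54% figure. The function names are hypothetical. `annualFromMonthly` assumes the monthly rate holds constant for all 12 months.

```javascript
// Retention rate = ((customers at end − new customers) / customers at start) × 100
function retentionRate(startCount, endCount, newCount) {
  return ((endCount - newCount) * 100) / startCount;
}

// Compound a constant monthly retention rate (in %) into an annual rate (in %)
function annualFromMonthly(monthlyRatePct) {
  return Math.pow(monthlyRatePct / 100, 12) * 100;
}

console.log(retentionRate(1000, 1030, 80)); // 95 (950 of 1,000 starting customers kept)
console.log(annualFromMonthly(95).toFixed(1)); // 54.0
console.log(annualFromMonthly(98).toFixed(1)); // 78.5
```

For comparison, 98% monthly retention (2% monthly churn) compounds to roughly 78.5% a year.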

Customer Lifetime Value (CLV)

CLV is the total revenue expected from a customer over their entire relationship. Simple formula: ARPU / Monthly Churn Rate. At $200 ARPU and 2% monthly churn, CLV = $10,000. For margin-adjusted CLV: ARPU × Gross Margin % × (1 / Monthly Churn Rate). Calculate CLV by acquisition channel and plan tier — a $50/month organic customer churning at 1% monthly ($5,000 CLV at 100% margin) often outperforms a $150/month paid-acquisition customer churning at 4% monthly ($3,750 CLV at 100% margin). Pull ARPU cohorts from Stripe Revenue Recognition reports, then cross-reference with behavioral cohorts in PostHog using Group Analytics to attach subscription data to user segments.
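The CLV formulas translate directly into code. This hypothetical helper takes churn and gross margin as decimals and reproduces the worked examples in the paragraph above. Like the simple formula, it assumes churn stays constant over the customer's lifetime.

```javascript
// Simple CLV = ARPU / monthly churn; margin-adjusted CLV also multiplies by gross margin.
// Churn and margin are decimals (2% → 0.02, 80% → 0.8); margin defaults to 100%.
function clv(arpu, monthlyChurn, grossMargin = 1) {
  if (monthlyChurn <= 0) throw new RangeError('monthlyChurn must be > 0');
  return (arpu * grossMargin) / monthlyChurn;
}

console.log(clv(200, 0.02)); // 10000
console.log(clv(50, 0.01)); // organic: 5000
console.log(clv(150, 0.04)); // paid: 3750
console.log(clv(200, 0.02, 0.8)); // at 80% gross margin: 8000
```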

Performance Metrics for Customer Service: FRT and ART

First Response Time and Average Resolution Time are the two performance metrics for customer service that most directly drive CES and CSAT scores. Both are fully measurable and highly actionable with the right instrumentation.

First Response Time (FRT)

FRT measures elapsed time from ticket creation to first agent reply. Formula: Sum of all first response times / Number of tickets that received a first response in the period. Benchmarks vary by channel: email < 1 hour (Zendesk 2023 CX Trends Report), live chat < 5 minutes, social < 30 minutes. Teams that consistently miss FRT benchmarks score measurably lower on CSAT within 30 days. The correlation is strong enough to use FRT as a leading CSAT indicator. Pipe FRT from Zendesk or Intercom into Amplitude as a support_first_response event with {hours: 0.75, channel: 'email', plan: 'pro'}. Monitor p50/p75/p90 percentiles rather than just the average. The average alone can't tell you whether slowness is widespread or concentrated in a few neglected tickets.
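Here is a hypothetical sketch of the percentile view for FRT. It assumes first response times have already been exported in hours, for example from the `support_first_response` events described above. It uses nearest-rank percentiles.

```javascript
// Nearest-rank percentile over an already-sorted array
function percentile(sortedValues, p) {
  const rank = Math.ceil((p / 100) * sortedValues.length);
  return sortedValues[Math.max(rank - 1, 0)];
}

// Summarize first response times (hours) as average plus p50/p75/p90
function frtSummary(hours) {
  const sorted = [...hours].sort((a, b) => a - b);
  const avg = sorted.reduce((s, h) => s + h, 0) / sorted.length;
  return {
    avg,
    p50: percentile(sorted, 50),
    p75: percentile(sorted, 75),
    p90: percentile(sorted, 90),
  };
}

// Ten email tickets: most answered quickly, two left waiting for hours
const emailFrtHours = [0.2, 0.3, 0.4, 0.5, 0.5, 0.6, 0.75, 0.9, 6, 8];
console.log(frtSummary(emailFrtHours));
```

Here the median ticket gets a reply in 30 minutes and p75 is under an hour. Two slow tickets push the average to roughly 1.8 hours and p90 to 6 hours. The percentiles show that the problem is a tail of neglected tickets, not slow replies across the board.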

Average Resolution Time (ART)

ART measures total elapsed time from ticket open to resolved. Formula: Sum of resolution times / Number of resolved tickets. Benchmark: < 24 hours for email support in SaaS. Segment ART by issue type, plan tier, and support tier — enterprise customers with SLAs have very different baselines than self-serve users. Pair ART with CES: if ART is improving but CES is flat, the problem isn't speed — it's interaction quality (excessive back-and-forth, unclear answers). Log resolution data in GA4 with gtag('event', 'ticket_resolved', {resolution_hours: 3.5, category: 'billing', plan: 'enterprise'}) and analyze in Explorations with a custom metric on resolution hours.
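Here is a sketch of segmented ART. It assumes a hypothetical ticket shape with `openedAt` and `resolvedAt` timestamps in milliseconds and a segment field such as `category` or `plan`. Open tickets are excluded because the formula divides by resolved tickets only.

```javascript
// Average Resolution Time per segment = sum of resolution hours / resolved tickets.
// Tickets with no `resolvedAt` are still open and are skipped.
function artBySegment(tickets, segmentKey) {
  const totals = new Map(); // segment -> { hours, count }
  for (const t of tickets) {
    if (t.resolvedAt == null) continue;
    const hours = (t.resolvedAt - t.openedAt) / 3_600_000; // ms → hours
    const entry = totals.get(t[segmentKey]) ?? { hours: 0, count: 0 };
    entry.hours += hours;
    entry.count += 1;
    totals.set(t[segmentKey], entry);
  }
  return Object.fromEntries(
    [...totals].map(([seg, { hours, count }]) => [seg, hours / count])
  );
}

const H = 3_600_000; // one hour in milliseconds
const tickets = [
  { category: 'billing', openedAt: 0, resolvedAt: 2 * H },
  { category: 'billing', openedAt: 0, resolvedAt: 4 * H },
  { category: 'bug', openedAt: 0, resolvedAt: 30 * H },
  { category: 'bug', openedAt: 0, resolvedAt: null }, // still open
];
console.log(artBySegment(tickets, 'category')); // { billing: 3, bug: 30 }
```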

```javascript
// GA4: Log ticket resolution with timing and context
gtag('event', 'ticket_resolved', {
  resolution_hours: 3.5,
  first_response_hours: 0.4,
  category: 'billing',
  plan: 'enterprise',
  message_count: 4 // back-and-forth volume
});
```

Growth Metrics: Referral Rate and Engagement Score

Referral rate and engagement score are the most forward-looking CX indicators — they predict expansion revenue and retention before either shows up in outcome metrics. They're also the hardest to instrument correctly.

Referral Rate

Referral rate measures the percentage of new customers acquired through existing customer referrals. Formula: (New customers from referral / Total new customers acquired) × 100. A healthy referral rate for B2B SaaS is 10–20%; consumer apps with strong network effects can reach 30–40%. Track referral source at signup with a ref query parameter and persist it as a user property on day one. In Mixpanel, use mixpanel.people.set({ acquisition_channel: 'referral', referrer_id: 'user_123' }) at signup. In Amplitude, set acquisition_source: 'referral' as a user property and build a funnel from signup_completed to first_value_event segmented by acquisition source — referral cohorts typically activate 15–25% faster than paid cohorts.
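Here is one way to capture the `ref` parameter and compute referral rate. The URL format, the `'other'` fallback label, and the helper names are hypothetical. The returned object mirrors the properties passed to `mixpanel.people.set` in the paragraph above, so it could be set as user properties at signup.

```javascript
// Hypothetical sketch: read the `ref` query parameter at signup...
function acquisitionFromUrl(signupUrl) {
  const ref = new URL(signupUrl).searchParams.get('ref');
  return ref
    ? { acquisition_channel: 'referral', referrer_id: ref }
    : { acquisition_channel: 'other', referrer_id: null };
}

// ...then referral rate = (new customers from referral / total new customers) × 100
function referralRate(newCustomers) {
  if (newCustomers.length === 0) return null;
  const referred = newCustomers.filter((c) => c.acquisition_channel === 'referral').length;
  return (referred * 100) / newCustomers.length;
}

const signupUrls = [
  'https://app.example.com/signup?ref=user_123',
  'https://app.example.com/signup?ref=user_456',
  ...Array(8).fill('https://app.example.com/signup?utm_source=google'),
];
const customers = signupUrls.map(acquisitionFromUrl);
console.log(referralRate(customers)); // 20
```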

Engagement Score

An engagement score is a composite metric combining multiple behavioral signals into a single number that predicts retention more reliably than any individual event. One common weighting model: score = (0.3 × normalized_frequency) + (0.3 × normalized_feature_breadth) + (0.4 × normalized_depth_actions). Each input is normalized to a 0–100 scale, and depth actions are your highest-value behaviors (exporting, collaborating, creating automations). In Amplitude, use Computed Properties to calculate per-user engagement scores in real time. In PostHog, build a custom query in the SQL Editor joining 30-day event counts per user into a weighted score. Users dropping below a threshold (e.g., score < 40 out of 100) should trigger an automated re-engagement workflow via your CRM or email platform.
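Here is a minimal sketch of the weighted engagement score. The source doesn't specify how each input is normalized. This example assumes min-max scaling to 0–100 across the current user base, which makes scores relative to your own users and keeps a 40-point threshold meaningful. The field names are hypothetical 30-day counts.

```javascript
// Weighted engagement score from three 30-day behavioral inputs
const WEIGHTS = { frequency: 0.3, breadth: 0.3, depth: 0.4 };

// Min-max normalize to 0–100 across the user base (assumption, see lead-in)
function minMaxNormalize(values) {
  const min = Math.min(...values);
  const max = Math.max(...values);
  return values.map((v) => (max === min ? 0 : ((v - min) / (max - min)) * 100));
}

function engagementScores(users) {
  const freq = minMaxNormalize(users.map((u) => u.sessions30d));
  const breadth = minMaxNormalize(users.map((u) => u.distinctFeatures30d));
  const depth = minMaxNormalize(users.map((u) => u.depthActions30d));
  return users.map((u, i) => ({
    userId: u.userId,
    score: WEIGHTS.frequency * freq[i] + WEIGHTS.breadth * breadth[i] + WEIGHTS.depth * depth[i],
  }));
}

const users = [
  { userId: 'u1', sessions30d: 20, distinctFeatures30d: 8, depthActions30d: 12 },
  { userId: 'u2', sessions30d: 12, distinctFeatures30d: 4, depthActions30d: 6 },
  { userId: 'u3', sessions30d: 4, distinctFeatures30d: 2, depthActions30d: 0 },
];
// Users below the threshold become candidates for a re-engagement workflow
const atRisk = engagementScores(users).filter((u) => u.score < 40);
console.log(atRisk.map((u) => u.userId)); // [ 'u3' ]
```

Min-max scaling is sensitive to outliers: one power user can compress everyone else's scores. Capping each input at a high percentile before normalizing is a reasonable variation.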

```javascript
// Amplitude: Track depth events that feed the engagement score
amplitude.track('engagement_depth_action', {
  action_type: 'export_report', // the specific depth behavior
  feature_area: 'analytics',
  user_plan: 'pro',
  weekly_session_count: 4 // pass session context for score weighting
});
```

How to Monitor Customer Experience Across Your Analytics Stack

Tracking individual CX metrics in isolation is straightforward. The hard part is connecting them into a coherent view, such as tying a CSAT drop to a specific product change or linking a CES spike to a support process failure.

Routing Survey Data Into Product Analytics

Pipe NPS, CSAT, and CES survey responses into your product analytics tool as custom events. This unlocks the most actionable analysis in CX: correlating perception scores with behavioral cohorts in the same query. In Amplitude, use the HTTP API to ingest survey webhooks: POST https://api2.amplitude.com/2/httpapi with the score as an event property alongside existing user properties. In Mixpanel, the Import API accepts webhook payloads from Typeform, Delighted, or Survicate with minimal transformation. In PostHog, Surveys are native — responses automatically become events queryable alongside product behavior data.
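Here is a minimal sketch of forwarding a survey webhook to Amplitude's HTTP V2 endpoint named above. It assumes Node.js 18+ for the built-in `fetch`. The incoming webhook shape (`userId`, `surveyType`, `score`, `submittedAt`) is hypothetical; map it from whatever your survey tool actually posts.

```javascript
// Hypothetical sketch: map a survey webhook to an Amplitude event and send it
const AMPLITUDE_HTTP_API = 'https://api2.amplitude.com/2/httpapi';

function toAmplitudeEvent(webhook) {
  return {
    user_id: webhook.userId,
    event_type: `${webhook.surveyType}_response`, // e.g. nps_response
    time: Date.parse(webhook.submittedAt), // epoch milliseconds
    event_properties: { score: webhook.score },
  };
}

async function forwardSurveyResponse(webhook, apiKey) {
  const res = await fetch(AMPLITUDE_HTTP_API, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ api_key: apiKey, events: [toAmplitudeEvent(webhook)] }),
  });
  if (!res.ok) throw new Error(`Amplitude ingest failed: ${res.status}`);
}
```

Keeping the mapping in a pure function makes it easy to test without sending anything to Amplitude.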

Building a CX Metrics Dashboard That Teams Actually Use

A useful CX dashboard has three layers: current state (CSAT, NPS, active churn rate this month), trend (30/60/90-day rolling averages), and leading indicators (engagement score distribution, FRT percentiles, CES by feature area). In Amplitude, use Dashboards with shared charts and Markdown cards for context. In PostHog, Dashboards support pinned insights embeddable in Notion or Confluence via share link. Build separate dashboards for product teams (behavioral metrics), support teams (FRT/ART/CES), and leadership (NPS/churn/CLV) — one mega-dashboard serves no one well.

Setting Up Proactive Alerts for CX Metric Drops

Dashboards are passive: you only see a problem when someone opens them, often after it has already compounded. Set up metric alerts so drops surface early. In Amplitude, use the Monitor feature on any chart (retention, funnel, custom metric) to trigger Slack alerts when a metric crosses a threshold. In PostHog, Alerts on Insights support both absolute thresholds and percentage-change triggers with Slack or email destinations. Set thresholds at 1.5 standard deviations from your trailing 30-day baseline rather than at fixed numbers. Because the baseline moves with the data, thresholds adapt to gradual and seasonal shifts without constant manual recalibration.
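The 1.5-standard-deviation rule fits in a few lines. This is a hypothetical helper, not Amplitude's or PostHog's alerting logic. It uses the population standard deviation of the trailing 30 values and flags deviations in either direction, since a rise in CES or FRT is as bad as a drop in CSAT.

```javascript
// Hypothetical helper: flag a value more than k standard deviations from the
// mean of the trailing window (default: 1.5 SD over 30 daily values).
function isAnomalous(history, today, k = 1.5, windowSize = 30) {
  const baseline = history.slice(-windowSize);
  const mean = baseline.reduce((sum, v) => sum + v, 0) / baseline.length;
  const variance =
    baseline.reduce((sum, v) => sum + (v - mean) ** 2, 0) / baseline.length;
  const sd = Math.sqrt(variance); // population standard deviation
  return Math.abs(today - mean) > k * sd;
}

// 30 days of CSAT alternating between 80% and 82%: mean 81, SD 1
const dailyCsat = Array.from({ length: 30 }, (_, i) => (i % 2 === 0 ? 80 : 82));
console.log(isAnomalous(dailyCsat, 80)); // false (1 point from the mean)
console.log(isAnomalous(dailyCsat, 79)); // true (2 points > 1.5 SD)
```

When the baseline is perfectly flat, the standard deviation is 0 and any change triggers an alert. In practice you may want a minimum absolute threshold as well.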

Frequently Asked Questions

What is the difference between CSAT and NPS?

CSAT measures satisfaction with a specific interaction — a support ticket, a feature, a purchase. NPS measures overall relationship sentiment and likelihood to recommend your product. Use CSAT to diagnose specific touchpoints and NPS to track the health of the overall customer relationship over time.

What are good benchmark numbers for customer experience metrics in SaaS?

For B2B SaaS: NPS 30–50 is good, 50+ is excellent. CSAT 75–85% is typical; below 70% warrants action. Monthly churn 0.5–2% is healthy. CES below 3.0 on a 7-point scale indicates low friction. For email support, FRT under 1 hour is the benchmark cited in Zendesk's 2023 CX Trends Report.

How do you track customer experience metrics in Amplitude?

Fire custom events for survey responses (e.g., nps_response with a score property), use Retention Analysis with a behavioral trigger event for retention metrics, use Computed Properties for engagement scores, and set up Monitors to alert on metric drops. Amplitude's core advantage is joining perception data (survey events) with behavioral cohort data in the same analysis.

What is a customer engagement score and how is it calculated?

A customer engagement score is a composite metric combining session frequency, feature breadth (number of distinct features used in 30 days), and depth actions (high-value behaviors like exporting or collaborating) into a single number. Depth actions typically carry the highest weight (40%, versus 30% each for frequency and breadth) because they correlate most strongly with renewal intent.

How do you measure Customer Effort Score (CES)?

Collect CES via a survey immediately after a key task completes: 'How much effort did it take to complete this task?' on a 1–7 scale (1 = very low effort). Calculate the average across all responses. CES below 3.0 is strong; above 4.5 signals meaningful friction. Trigger the survey on a product completion event rather than a time delay — context-triggered surveys produce significantly higher-quality responses.

Wrapping Up

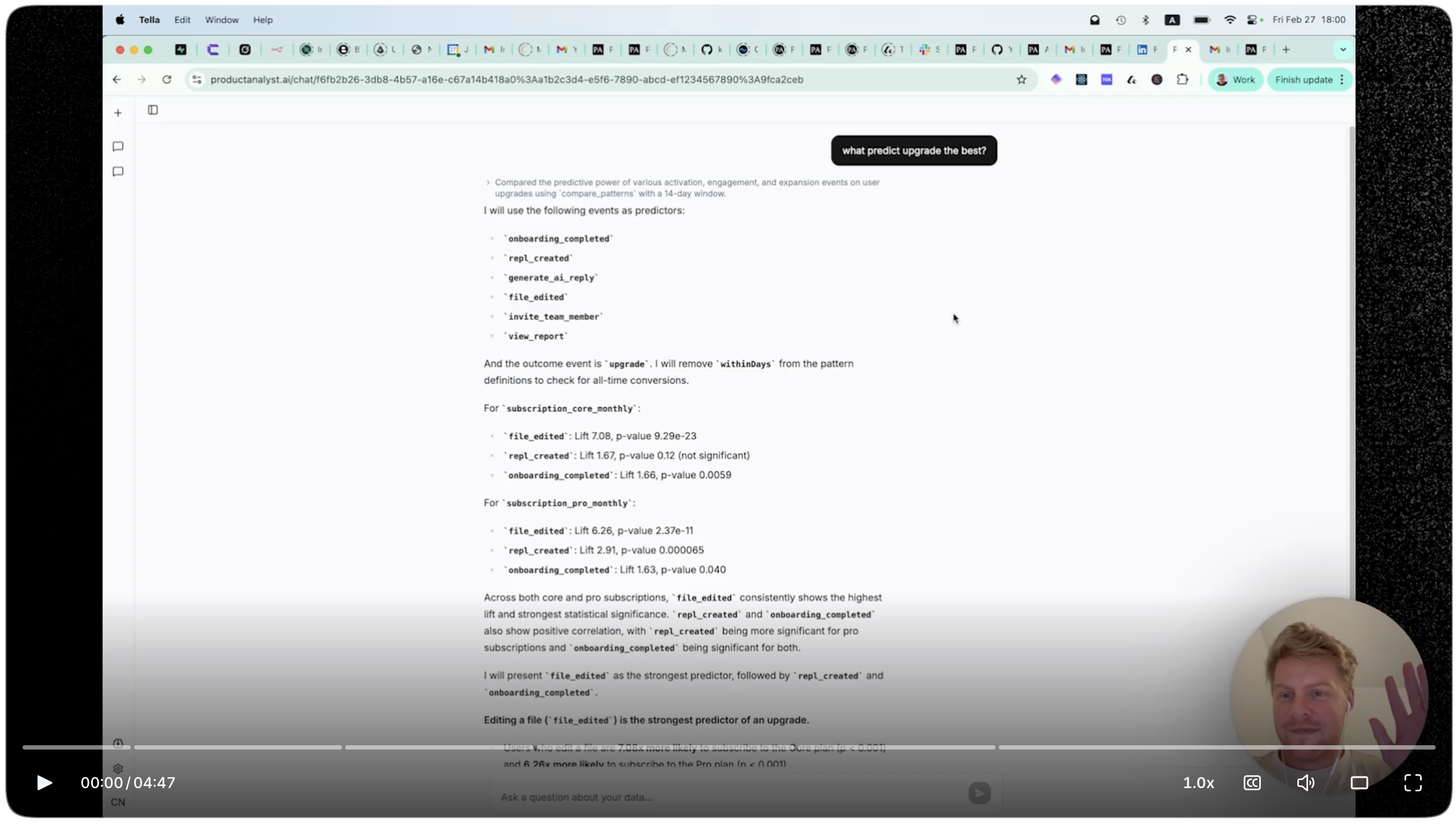

Customer experience metrics only earn their keep when they're connected to action: a CES spike should trigger a UX review, an NPS drop should surface in sprint planning, and a churn rate trend should inform your retention roadmap. Start with the three core perception metrics — CSAT, NPS, CES — instrumented on your highest-stakes user moments, add behavioral retention and an engagement score, then build toward a unified view where survey data and product analytics live in the same tool. If you want to track customer experience metrics automatically across tools, productanalyst.ai can help.